When parsing a user utterance, we need to identify the user’s intention (i.e. what the user wants) but also extract the parameters in the text that will be needed to answer the request. These parameters are called entities in the NLP world, and the process of extracting them from the utterance is known as named-entity recognition, or NER for short.

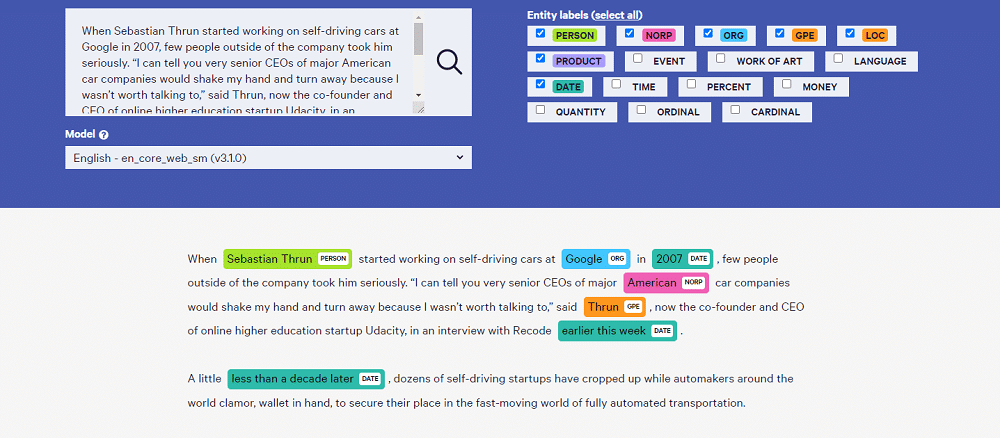

Entities could be names of people, places, dates, currencies and so on, as displayed in the featured image showing displaCy results on a sample text. But they could also be any list of values relevant to your domain (e.g. the types of pizzas your restaurant offers). In this case, we talk about custom NER.

In our previous post, we explained how to build your own chatbot intent classifier. Let’s now see how we can extend it to support custom NERs. Remember that this Xatkit NLU engine we’re building is fully open source.

Representing custom NERs as part of our chatbot definition

The first step is to let bot designers declare the custom entities that should be recognized when running the chatbot. We have extended our dsl.py module with additional classes for this purpose.

Now an intent can include a number of entity parameters, each one referencing a custom entity. The reference is identified as a specific text fragment in the training sentences. Each custom entity is defined as a set of values plus a list of possible synonyms for each value. For those using Xatkit as a fully-fledged chatbot platform (and not just playing with this NLU server as a standalone component), this would correspond to the concept of Mapping Entities.

The next gist shows a simple example of a weather intent with a custom city NER. As part of the intent definition, we are saying that the fragment corresponding to mycity should be matched to a city value from the list of possible cities in city_entity. Obviously, if you’d like to match any city, it would be best to reuse a predefined list of worldwide cities instead of manually creating your own, but for a simple example I’m sure you get the idea.
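To make the structure described above more concrete, here is a minimal, self-contained sketch of what such a definition could look like. The class names (CustomEntity, EntityReference, Intent), their fields, the training sentences and the "BCN" synonym are all assumptions made up for illustration; the actual classes in dsl.py may well differ.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class CustomEntity:
    """A custom entity: a name plus its values, each with optional synonyms."""
    name: str
    # Maps each canonical value to its list of synonyms.
    entries: Dict[str, List[str]] = field(default_factory=dict)


@dataclass
class EntityReference:
    """Links a text fragment used in training sentences to a custom entity."""
    fragment: str
    param_name: str
    entity: CustomEntity


@dataclass
class Intent:
    """An intent with its training sentences and entity parameters."""
    name: str
    training_sentences: List[str]
    entity_parameters: List[EntityReference] = field(default_factory=list)


# Hypothetical list of cities; "BCN" is an illustrative synonym.
city_entity = CustomEntity(
    name="city_entity",
    entries={"Barcelona": ["BCN"], "Madrid": [], "Paris": []},
)

# The fragment "mycity" in the training sentences stands for a city value.
weather_intent = Intent(
    name="weather",
    training_sentences=[
        "what is the weather like in mycity",
        "forecast for mycity",
    ],
    entity_parameters=[
        EntityReference(fragment="mycity", param_name="city", entity=city_entity)
    ],
)
```

Keeping the synonyms attached to each value means the matcher can always report the canonical value, whichever variant the user typed.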

Matching custom NERs

This part is rather simple: we iterate over all the possible custom NERs, try to match their values against the user’s utterance, and collect any matches. The matches are then returned as a dictionary.

So if the user says something like “What’s the forecast for Barcelona”, we would report back that we were able to find a reference to a city_entity in the utterance (Barcelona in this case).
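The matching loop described above could be sketched as follows. This is only an illustration under stated assumptions: the function name match_custom_entities, the shape of the entities argument, and the shape of the returned dictionary (entity name mapped to the list of matched values) are hypothetical, and whole-word, case-insensitive matching is one possible strategy among others.

```python
import re


def match_custom_entities(utterance, entities):
    """Return {entity_name: [matched canonical values]} for an utterance.

    `entities` maps an entity name to {canonical value: [synonyms]}
    (an assumed data shape, for illustration only).
    """
    matches = {}
    for entity_name, entries in entities.items():
        for value, synonyms in entries.items():
            for candidate in [value, *synonyms]:
                # Whole-word, case-insensitive match of the candidate text.
                pattern = r"\b" + re.escape(candidate) + r"\b"
                if re.search(pattern, utterance, flags=re.IGNORECASE):
                    matches.setdefault(entity_name, []).append(value)
                    break  # one hit per canonical value is enough
    return matches


entities = {"city_entity": {"Barcelona": ["BCN"], "Madrid": [], "Paris": []}}
result = match_custom_entities("What's the forecast for Barcelona", entities)
print(result)  # {'city_entity': ['Barcelona']}
```

A synonym hit is reported under its canonical value, so an utterance mentioning "bcn" would also yield Barcelona.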

Using custom NERs during the intent matching prediction

So far we’ve been able to identify entities, but they are not part of the intent matching process. Should they influence the prediction? Is NER information important to strengthen the chances that a certain intent is matched? We think so.

Therefore, if the parameter use_ner_in_prediction is set to true, before running the model prediction we replace any entity reference with the name of the entity type itself. This makes the user input more closely resemble the training sentences of the intents referencing that same entity, as both will now use the entity name (instead of any of its concrete values). This additional overlap will push the neural network to increase the probability of those intents being the match.
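As an illustration of this replacement step, the sketch below rewrites the utterance before it would be handed to the model. The function name prepare_for_prediction and the shape of the entities argument are assumptions; only the use_ner_in_prediction flag comes from the text above, and the way it is passed here is also hypothetical.

```python
import re


def prepare_for_prediction(utterance, entities, use_ner_in_prediction=True):
    """Replace matched entity values with their entity name before prediction.

    `entities` maps an entity name to {canonical value: [synonyms]}
    (an assumed data shape, for illustration only).
    """
    if not use_ner_in_prediction:
        return utterance

    # Map every surface form (value or synonym), lowercased, to its entity name.
    form_to_entity = {}
    for entity_name, entries in entities.items():
        for value, synonyms in entries.items():
            for candidate in [value, *synonyms]:
                form_to_entity[candidate.lower()] = entity_name
    if not form_to_entity:
        return utterance

    # Longer forms first so the alternation prefers the longest match.
    alternatives = sorted(form_to_entity, key=len, reverse=True)
    pattern = re.compile(
        r"\b(" + "|".join(re.escape(form) for form in alternatives) + r")\b",
        flags=re.IGNORECASE,
    )
    # Single pass over the text, so replaced names are never re-matched.
    return pattern.sub(lambda m: form_to_entity[m.group(1).lower()], utterance)


entities = {"city_entity": {"Barcelona": ["BCN"], "Madrid": [], "Paris": []}}
print(prepare_for_prediction("What's the forecast for Barcelona", entities))
# What's the forecast for city_entity
```

For the replaced input to resemble the training data, the training sentences would need the same substitution, for instance turning the mycity fragment into city_entity.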

You could even go one step further when combining the intent prediction with the matched NERs to determine the best intent candidate. Instead of just going for the intent with the highest probability, you could first filter out intents whose referenced entities could not be matched (or give more weight to those for which they were). Imagine that the NLP engine tells you that the best match for the user text is the “City weather forecast” intent but no city reference was matched in the process. You would then have to give the forecast for a city that you don’t know. If the city parameter was optional (e.g. maybe you want to ask for the city in the next state of the conversation) then no problem. But if it wasn’t optional (i.e. all training sentences for the intent referenced the entity), choosing that intent will probably end up triggering an error when the Xatkit client looks for it to build the response.

Especially when two intents have very similar probability values, it may be a good idea to choose the one for which we were able to match all the expected parameters.
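One possible way to implement this tie-breaking is sketched below. Everything here is an assumption for illustration: the function name choose_intent, the input shapes, the intent names and probabilities, and the margin threshold used to decide when two probabilities count as "very similar".

```python
def choose_intent(predictions, required_entities, matched_entities, margin=0.05):
    """Pick an intent, preferring close candidates whose entities were all matched.

    predictions: non-empty {intent name: probability}
    required_entities: {intent name: set of entity names the intent needs}
    matched_entities: set of entity names found in the utterance
    margin: hypothetical threshold for treating probabilities as very similar
    """
    ranked = sorted(predictions.items(), key=lambda item: item[1], reverse=True)
    best_name, best_prob = ranked[0]
    for name, prob in ranked:
        if best_prob - prob > margin:
            break  # remaining candidates are too far behind the top one
        # Subset test: every entity the intent requires was matched.
        if required_entities.get(name, set()) <= matched_entities:
            return name
    return best_name  # no close candidate is fully satisfied; keep the top one


predictions = {"city_weather": 0.48, "greetings": 0.46}
required = {"city_weather": {"city_entity"}, "greetings": set()}

print(choose_intent(predictions, required, matched_entities=set()))
# greetings
print(choose_intent(predictions, required, matched_entities={"city_entity"}))
# city_weather
```

With a margin of 0 this falls back to checking only the top-ranked intents that are exactly tied, so the threshold controls how aggressively entity matches can override the raw probabilities.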